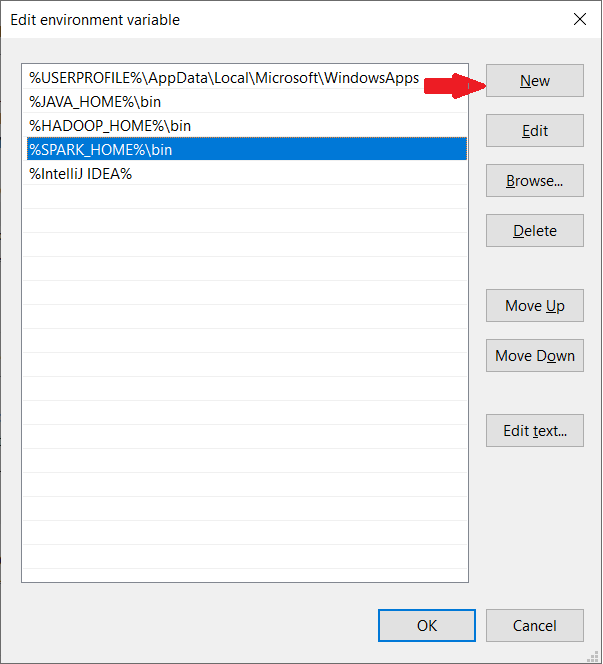

When you run the installer, on the Customize Python section, make sure that the option Add python.exe to Path is selected. If this option is not selected, some of the PySpark utilities such as pyspark and spark-submit might not work.

After the installation is complete, close the Command Prompt if it was already open, reopen it, and check that you can successfully run the python --version command.

Installing Apache Spark

- For Choose a Spark release, select the latest stable release of Spark.
- For Choose a package type, select a version that is pre-built for the latest version of Hadoop, such as Pre-built for Hadoop 2.6.
- For Choose a download type, select Direct Download.
- Click the link next to Download Spark to download a zipped tarball file ending in the .tgz extension, such as spark-1.6.2-bin-hadoop2.6.tgz.

In order to install Apache Spark, there is no need to run any installer. You can extract the files from the downloaded tarball into any folder of your choice using the 7Zip tool. Make sure that the folder path and the name of the folder containing the Spark files do not contain any spaces. In my case, I created a folder called spark on my C drive and extracted the zipped tarball into a folder called spark-1.6.2-bin-hadoop2.6.
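
If you would rather script the extraction than use 7Zip, the step of unpacking the .tgz into a folder whose path has no spaces can be sketched with Python's standard tarfile module. This is only a rough sketch under some assumptions: the helper names check_no_spaces and extract_spark are made up for illustration, and it assumes the archive came from the official Spark download page, since extractall should only be used on archives you trust.

```python
import os
import tarfile


def check_no_spaces(path):
    """Raise ValueError if the path contains a space, otherwise return it."""
    if " " in path:
        raise ValueError("Path contains a space: %r" % path)
    return path


def extract_spark(tgz_path, dest_dir):
    """Extract a Spark .tgz into dest_dir (hypothetical helper).

    Returns the paths of the top-level folders created by the archive.
    """
    check_no_spaces(os.path.abspath(dest_dir))
    with tarfile.open(tgz_path, "r:gz") as archive:
        # Collect the first path component of every member, e.g.
        # "spark-1.6.2-bin-hadoop2.6" for the archive named in this post.
        top_level = sorted({m.name.split("/")[0] for m in archive.getmembers()})
        archive.extractall(dest_dir)  # only do this for archives you trust
    return [os.path.join(dest_dir, name) for name in top_level]
```

For the layout described in this post, a call might look like extract_spark(r"C:\Downloads\spark-1.6.2-bin-hadoop2.6.tgz", r"C:\spark"), where the Downloads location is just a placeholder for wherever you saved the file.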

To install Python, download the Windows x86-64 MSI installer file. If you are using a 32-bit version of Windows, download the Windows x86 MSI installer file instead.
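
If you are not sure which of the two installers applies to your machine and you already have some Python interpreter around, the choice can be sketched roughly as below. The function name installer_for is made up for this example, and the mapping is an assumption: platform.machine() typically reports AMD64 on 64-bit Windows and x86 on 32-bit Windows, and anything else is treated here as 32-bit for simplicity.

```python
import platform


def installer_for(machine):
    """Map a machine string to the installer named in this post (illustrative)."""
    # Assumption: 64-bit Windows reports "AMD64"; "x86_64" is included because
    # other platforms use that spelling for the same architecture.
    if machine.upper() in ("AMD64", "X86_64"):
        return "Windows x86-64 MSI installer"
    # Everything else is treated as 32-bit here, which is a simplification.
    return "Windows x86 MSI installer"
```

Calling installer_for(platform.machine()) then gives the installer name for the computer the script runs on.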

I suggest getting the exe for Windows x64 (such as jre-8u92-windows-x64.exe) unless you are using a 32-bit version of Windows, in which case you need to get the Windows x86 Offline version.

After the installation is complete, close the Command Prompt if it was already open, reopen it, and check that you can successfully run the java -version command.

It is quite possible that a required version of Python (in our case version 2.6 or later) is already available on your computer. To check if Python is available and find its version, open a Command Prompt and run the python --version command. If Python is not available, you will see a message like the following:

'python' is not recognized as an internal or external command, operable program or batch file.
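
Once a Python interpreter does start, the version requirement mentioned in this post (2.6 or later) can also be checked from inside Python itself. The following is a small sketch, not part of the original setup steps; meets_minimum and describe_interpreter are names I made up for illustration.

```python
import sys

MINIMUM = (2, 6)  # minimum Python version stated in this post


def meets_minimum(version_info, minimum=MINIMUM):
    """Return True if a (major, minor, ...) tuple is at least `minimum`."""
    return tuple(version_info[:2]) >= tuple(minimum)


def describe_interpreter():
    """Return a one-line summary of the interpreter running this script."""
    version = "%d.%d.%d" % tuple(sys.version_info[:3])
    status = "OK" if meets_minimum(sys.version_info) else "too old"
    return "Python %s at %s: %s" % (version, sys.executable, status)
```

Printing describe_interpreter() shows which python.exe was actually picked up from the Path, which helps when more than one Python is installed.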

In case you need a refresher on the Command Prompt, a quick introduction might be handy.

Many open source projects do not have good Windows support, so I first had to figure out whether Spark and PySpark would work well on Windows. The official Spark documentation does mention support for Windows.

PySpark requires Java version 7 or later and Python version 2.6 or later. Let's first check whether they are already installed, install them if they are not, and make sure that PySpark can work with these two components.

It is quite possible that a required version of Java (in our case version 7 or later) is already available on your computer. To check if Java is available and find its version, open a Command Prompt and run the java -version command. If Java is not available, you will see a message like the following:

'java' is not recognized as an internal or external command, operable program or batch file.

In that case, you need to install Java. In case the download link has changed, search for Java SE Runtime Environment on the internet and you should be able to find the download page. Accept the license agreement and download the latest version of the Java SE Runtime Environment installer.
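
The Java check can be scripted as well. The sketch below assumes a Python 3.5 or later interpreter is available to run it (it relies on shutil.which and subprocess.run, which are not in Python 2.6), and the function names are my own. It uses the fact that java -version writes its report to stderr, and that releases up to Java 8 report themselves as 1.x, for example 1.8.0_92.

```python
import re
import shutil
import subprocess


def parse_java_major(version_output):
    """Extract the major Java version from `java -version` output, or None."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    first = int(match.group(1))
    second = match.group(2)
    # Releases up to Java 8 use the 1.x scheme, so 1.7 means Java 7.
    if first == 1 and second is not None:
        return int(second)
    return first


def installed_java_major():
    """Return the major version of the `java` found on the Path, or None."""
    if shutil.which("java") is None:
        return None  # same situation as the "'java' is not recognized" message
    result = subprocess.run(
        ["java", "-version"],
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,  # the version report is written to stderr
        universal_newlines=True,
    )
    return parse_java_major(result.stderr + result.stdout)
```

A result of None from installed_java_major() means Java could not be found or its output could not be parsed; any number of 7 or more satisfies the requirement stated above.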

I am also assuming that you are comfortable working with the Command Prompt on Windows. You do not have to be an expert, but you need to know how to start a Command Prompt and run commands such as those that help you move around your computer's file system.

Spark supports a Python programming API called PySpark that is actively maintained, and that was enough to convince me to start learning PySpark for working with big data. In this post, I describe how I got started with PySpark on Windows. The screenshots are specific to Windows 10.

I decided to teach myself how to work with big data and came across Apache Spark. I had heard of Apache Hadoop, but to use Hadoop for working with big data I would have had to write code in Java, which I was not really looking forward to, as I love to write code in Python.